
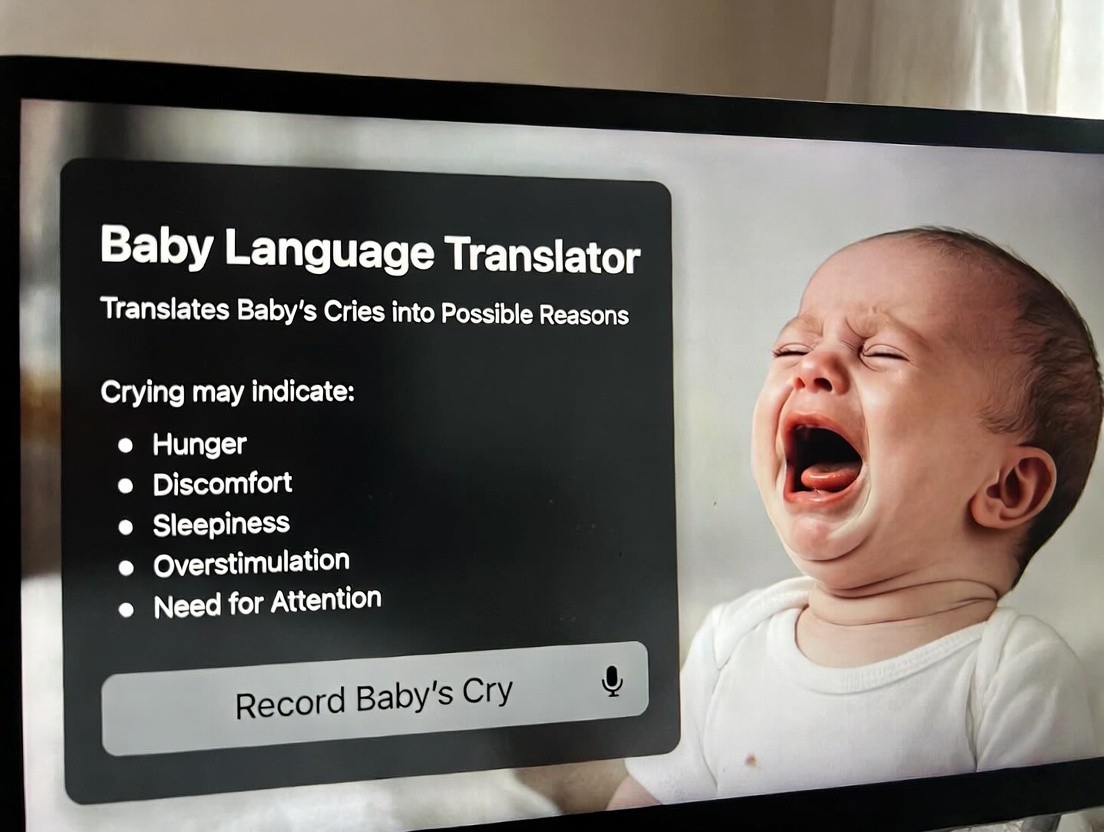

Program Translates Baby Language and Explains Crying Reasons

Understanding Infant Communication

Newborns cannot speak, but they communicate through cries, coos, and body language. Parents often struggle to interpret these signals and end up guessing whether their infant needs feeding, comfort, sleep, or medical attention. This uncertainty adds to parental stress and can delay the right response to a genuine need. Artificial intelligence is now being applied to this problem, offering a new way to interpret baby communication.

How Baby Language Translation Works

Acoustic Analysis Technology

The AI program analyzes the acoustic properties of baby sounds, including frequency, intensity, duration, and temporal patterns. Infants produce different cries with distinct characteristics: hunger cries differ acoustically from pain cries, which in turn differ from tiredness cries. The algorithm is designed to pick up on these differences.
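
To make the idea of "acoustic properties" concrete, here is a minimal sketch of how duration, intensity, and a rough frequency estimate could be computed from raw audio samples. It is not the program's actual method: the function name `extract_features`, the use of RMS for intensity, and the zero-crossing frequency estimate are all assumptions chosen for simplicity, and a synthetic tone stands in for a real recording.

```python
import math

def extract_features(samples, sample_rate):
    """Return duration, RMS intensity, and a rough frequency estimate.

    Hypothetical helper for illustration; a real system would likely use
    spectral analysis rather than zero crossings.
    """
    if not samples:
        raise ValueError("samples must not be empty")
    duration = len(samples) / sample_rate
    # Root-mean-square amplitude as a simple proxy for intensity.
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    # Count sign changes; a pure tone crosses zero twice per cycle.
    crossings = sum(
        1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0)
    )
    est_freq = crossings / (2 * duration)
    return {"duration_s": duration, "rms": rms, "est_freq_hz": est_freq}

# A synthetic one-second 440 Hz tone stands in for a recorded cry.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 440 * n / sample_rate) for n in range(sample_rate)]
features = extract_features(tone, sample_rate)
print(features)
```

Zero crossings only approximate the dominant frequency of a clean tone. Real cries are noisy and harmonically rich, so this estimate would be far less reliable on actual recordings.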

Training Data Foundation

Developers trained the system on thousands of recorded baby sounds with documented causes. Machine learning algorithms identified patterns that correlate specific acoustic characteristics with underlying infant needs. In this way the system learned to pick up subtle variations that human listeners may not reliably distinguish.
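
The article does not say which learning algorithm was used. As an illustration of learning from labeled recordings, the sketch below trains a nearest-centroid classifier, one of the simplest possible choices. The feature vectors (normalized pitch and intensity), the labels, and the function names `train_centroids` and `predict` are invented for the example.

```python
import math
from collections import defaultdict

def train_centroids(examples):
    """Average the feature vectors of each label.

    examples: list of (feature_vector, label) pairs.
    Returns a dict mapping label -> mean feature vector.
    """
    sums = {}
    counts = defaultdict(int)
    for vec, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(vec)
        for i, value in enumerate(vec):
            sums[label][i] += value
        counts[label] += 1
    return {
        label: [s / counts[label] for s in total]
        for label, total in sums.items()
    }

def predict(centroids, vec):
    """Return the label whose centroid is closest (Euclidean distance)."""
    return min(centroids, key=lambda label: math.dist(centroids[label], vec))

# Made-up training data: [normalized pitch, normalized intensity].
examples = [
    ([0.40, 0.50], "hunger"),
    ([0.45, 0.55], "hunger"),
    ([0.80, 0.90], "pain"),
    ([0.85, 0.95], "pain"),
]
centroids = train_centroids(examples)
print(predict(centroids, [0.42, 0.50]))
print(predict(centroids, [0.90, 0.90]))
```

A production system trained on thousands of recordings would almost certainly use a richer model and many more features. The point here is only the shape of the process: labeled examples go in, and a function from features to a likely need comes out.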

Identifying Specific Infant Needs

Cry Classification System

- Hunger cries: rhythmic patterns with specific frequency ranges

- Discomfort cries: higher intensity with irregular patterns

- Tiredness cries: lower frequency with whimpering characteristics

- Pain cries: sharp onset with sustained intensity

- Overstimulation cries: rapid intensity fluctuations

- Attention-seeking cries: intermittent patterns with pauses

- Illness indicators: unusual tones suggesting medical concern
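
The categories above can be written down as a small lookup table. The sketch below encodes each category as a set of qualitative descriptors taken from the list and ranks the categories by how well they overlap with what was observed in a recording. The descriptor names, the `CRY_PROFILES` table, and the overlap (Jaccard) scoring are assumptions for illustration, not the program's real classifier.

```python
# Descriptor sets paraphrased from the list above (hypothetical encoding).
CRY_PROFILES = {
    "hunger": {"rhythmic"},
    "discomfort": {"high_intensity", "irregular"},
    "tiredness": {"low_frequency", "whimpering"},
    "pain": {"sharp_onset", "sustained_intensity"},
    "overstimulation": {"rapid_intensity_fluctuation"},
    "attention_seeking": {"intermittent", "pauses"},
    "illness": {"unusual_tone"},
}

def rank_categories(observed):
    """Rank categories by Jaccard overlap with the observed descriptors."""
    scores = {}
    for name, profile in CRY_PROFILES.items():
        union = profile | observed
        scores[name] = len(profile & observed) / len(union) if union else 0.0
    # Highest overlap first; ties keep the table's original order.
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

ranking = rank_categories({"sharp_onset", "sustained_intensity"})
print(ranking[0])
```

In practice the descriptors would themselves be outputs of acoustic analysis rather than hand-entered tags, and a cry that matches several categories partially is a good reason to show more than one candidate to the parent.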

Application and User Interface

Mobile and Device Integration

Parents use smartphone applications or wearable devices to record baby sounds. The AI analyzes the recording in near real time, typically within seconds, and reports the infant's likely needs. The interface displays a confidence level for each interpretation, helping parents make informed decisions.
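
One common way to turn a model's raw scores into displayable confidence levels is a softmax, which maps any set of scores to percentages that sum to 100. The sketch below assumes that approach; the article does not state how the app derives its confidence values, and the scores and the name `to_confidences` are made up.

```python
import math

def to_confidences(scores):
    """Convert raw scores to probabilities with a numerically stable softmax."""
    peak = max(scores.values())
    # Subtracting the peak avoids overflow without changing the result.
    exps = {label: math.exp(value - peak) for label, value in scores.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}

# Hypothetical raw scores from a classifier.
raw_scores = {"hunger": 2.0, "tiredness": 1.0, "pain": 0.0}
confidences = to_confidences(raw_scores)
for label, p in sorted(confidences.items(), key=lambda item: item[1], reverse=True):
    print(f"{label}: {p:.0%}")
```

Showing the full distribution rather than a single answer lets a parent see when the system is unsure, for example when two interpretations are nearly tied.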

Contextual Information

The most effective versions incorporate contextual data such as feeding schedules, sleep patterns, recent diaper changes, and room temperature. This additional information can improve accuracy, because a baby's needs tend to follow predictable patterns based on the time elapsed since the last feeding or sleep.
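
One way to fold context into the result is to treat it as a prior and multiply it with the acoustic probabilities, in the spirit of Bayes' rule. The sketch below assumes that design. The linear ramps, the 3-hour and 2-hour time scales, the constant for "other", and the function names are illustrative guesses, not values from the article.

```python
def context_prior(hours_since_feed, hours_awake):
    """Build an unnormalized prior from context (all constants are assumed)."""
    # Hunger becomes more plausible as time since the last feed grows.
    hunger = 0.1 + min(hours_since_feed / 3.0, 1.0)
    # Tiredness becomes more plausible the longer the baby has been awake.
    tiredness = 0.1 + min(hours_awake / 2.0, 1.0)
    return {"hunger": hunger, "tiredness": tiredness, "other": 0.5}

def combine(acoustic, prior):
    """Multiply acoustic probabilities by the prior and renormalize."""
    unnormalized = {label: acoustic[label] * prior[label] for label in acoustic}
    total = sum(unnormalized.values())
    return {label: value / total for label, value in unnormalized.items()}

# The audio alone cannot separate hunger from tiredness in this example.
acoustic = {"hunger": 0.4, "tiredness": 0.4, "other": 0.2}
prior = context_prior(hours_since_feed=3.0, hours_awake=0.5)
posterior = combine(acoustic, prior)
print(posterior)
```

Here the audio is ambiguous, but a feed three hours ago and a recent nap tip the combined estimate toward hunger.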

Benefits for Parents and Caregivers

Stress Reduction

Parents of newborns experience tremendous stress and sleep deprivation. Understanding what their baby needs reduces anxiety and enables faster, more appropriate responses. Parents report feeling more confident and capable when equipped with data about their infant's communication.

Improved Infant Care

When parents understand their baby's needs accurately, they can respond more effectively. Infants are comforted sooner when distressed, which can mean shorter crying episodes and better sleep for the entire family. Some studies suggest this technology may reduce the incidence of postpartum depression.

Medical Applications

Early Illness Detection

The algorithm flags cries that suggest a medical concern requiring professional evaluation. Subtle acoustic changes can indicate infections, reflux disease, or other conditions. Healthcare providers using this technology may be able to identify sick infants earlier, which could improve outcomes.

Development Monitoring

Normal infant communication patterns change as babies develop. The AI tracks these changes and alerts caregivers to possible developmental delays or other concerns. Early detection allows earlier intervention, which supports the child's development.

Limitations and Considerations

The technology works best with healthy, term infants. Premature or medically complex infants may produce atypical sounds, reducing accuracy. Additionally, individual babies develop unique crying patterns that require calibration time. The system functions best as a supplement to parental instinct and professional medical advice rather than a replacement.
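
The calibration period mentioned above can be pictured as building a personal baseline for each baby. The sketch below keeps a running mean and standard deviation of one feature (here, a hypothetical pitch value in Hz) using Welford's online algorithm, and refuses to score a new cry until enough samples have been collected. The class name `Baseline`, the `min_samples` threshold, and the sample values are assumptions.

```python
import math

class Baseline:
    """Running per-baby baseline for one acoustic feature (Welford's method)."""

    def __init__(self, min_samples=5):
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # Sum of squared deviations from the running mean.
        self.min_samples = min_samples

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    def z_score(self, x):
        """Return how unusual x is for this baby, or None while calibrating."""
        if self.n < self.min_samples:
            return None
        std = math.sqrt(self.m2 / (self.n - 1))
        if std == 0:
            return None  # No variation observed yet; cannot score.
        return (x - self.mean) / std

baseline = Baseline()
early = baseline.z_score(400.0)  # Still calibrating, so no score yet.
for pitch_hz in [400.0, 410.0, 390.0, 405.0, 395.0]:
    baseline.update(pitch_hz)
late = baseline.z_score(440.0)
print(early, round(late, 2))
```

Returning no score during calibration is a deliberate choice in this sketch: an early, poorly grounded reading could mislead a parent more than no reading at all.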

Future Enhancements

Next-generation systems are expected to incorporate video analysis of body language alongside acoustic data. Integration with smart home systems could enable automatic responses, such as adjusting the temperature if the baby is cold or alerting parents if distress persists. Personalization should improve as the system learns each baby's unique communication style.
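
The two automatic responses described above amount to simple rules. The sketch below shows one way such rules might look. The function `decide_actions`, the action names, the 20 °C minimum, and the two-minute alert delay are hypothetical and are not recommendations for actual nursery settings.

```python
def decide_actions(room_temp_c, distress_seconds,
                   min_temp_c=20.0, alert_after_s=120):
    """Return a list of actions for a hypothetical smart home controller."""
    actions = []
    # Warm the room if it has dropped below the assumed comfort minimum.
    if room_temp_c < min_temp_c:
        actions.append("raise_temperature")
    # Escalate to a human if crying has continued past the assumed limit.
    if distress_seconds >= alert_after_s:
        actions.append("alert_parents")
    return actions

print(decide_actions(room_temp_c=18.0, distress_seconds=150))
print(decide_actions(room_temp_c=22.0, distress_seconds=30))
```

Keeping the alert rule independent of the temperature rule means a parent is still notified when an automatic adjustment does not settle the baby.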

Conclusion

Baby language translation technology represents a significant advancement in infant care. By translating crying and other sounds into actionable information, the program helps parents respond more effectively to their children's needs. This technology promises to reduce parental stress while improving infant outcomes and development.